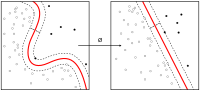

The main difference between video-based and image-based action recognition is that video adds a temporal dimension. Most deep-learning methods for action recognition either (1) use 3D convolution to model temporal features or (2) introduce an auxiliary temporal feature such as optical flow. However, 3D convolutional networks usually consume substantial computational resources, and optical flow extraction requires a tedious extra step, needs additional storage, and usually models only short-range temporal features. To construct better temporal features, in this paper we propose a multi-scale attention spatial–temporal features network based on SSD. The network sparsely samples the video by dividing the whole sequence into segments across its full range, and uses a self-attention mechanism to capture the relation between each frame and the sequence of frames sampled over the entire video, so that the network attends to the representative frames in the sequence. Moreover, an attention mechanism assigns different weights to the inter-frame relations representing different time scales, in order to reason about the contextual relations of actions in the time dimension. Our proposed method achieves competitive performance on two commonly used datasets: UCF101 and HMDB51.
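The two ideas the abstract combines, segment-wise sparse sampling and self-attention across the sampled frames, can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: the function names `sample_segment_indices` and `self_attention` are hypothetical, the attention uses identity projections instead of learned query/key/value weights, and the multi-scale weighting of inter-frame relations is omitted.

```python
import math
import random


def sample_segment_indices(num_frames, num_segments, rng=None):
    """Split [0, num_frames) into equal segments and pick one frame per segment.

    With rng=None the centre frame of each segment is chosen (deterministic);
    otherwise a random frame inside each segment is drawn.
    """
    if num_segments <= 0 or num_frames < num_segments:
        raise ValueError("need 1 <= num_segments <= num_frames")
    indices = []
    for k in range(num_segments):
        start = (k * num_frames) // num_segments
        end = ((k + 1) * num_frames) // num_segments  # exclusive bound
        if rng is None:
            indices.append((start + end - 1) // 2)
        else:
            indices.append(rng.randrange(start, end))
    return indices


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(features):
    """Scaled dot-product self-attention with identity projections (assumption).

    features: list of per-frame feature vectors of equal length.
    Returns (outputs, weights); weights[i][j] is how strongly frame i
    attends to frame j among the sampled frames.
    """
    dim = len(features[0])
    outputs, weights = [], []
    for query in features:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in features
        ]
        row = softmax(scores)
        weights.append(row)
        outputs.append(
            [sum(row[j] * features[j][c] for j in range(len(features)))
             for c in range(dim)]
        )
    return outputs, weights


if __name__ == "__main__":
    idx = sample_segment_indices(num_frames=30, num_segments=3)
    print(idx)  # [4, 14, 24]
    # Toy 2-D "frame features" standing in for CNN outputs (made-up values).
    feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    _, attn = self_attention(feats)
    print([round(sum(row), 6) for row in attn])  # each row sums to 1.0
```

Sampling one frame per segment keeps the number of processed frames fixed regardless of video length, while the attention weights let every sampled frame draw on every other one, which is how long-range relations can be covered without 3D convolution or optical flow.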

Journal Title: Multimedia Systems

Year Published: 2021
