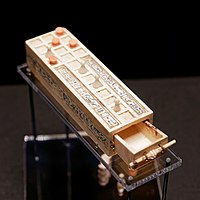

Photo from wikipedia

Adaptive dynamic programming (ADP) is an important branch of reinforcement learning to solve various optimal control issues. Most practical nonlinear systems are controlled by more than one controller. Each controller… Click to show full abstract

Adaptive dynamic programming (ADP) is an important branch of reinforcement learning for solving optimal control problems. Most practical nonlinear systems are controlled by more than one controller. Each controller is a player, and the tradeoff between cooperation and conflict among these players can be viewed as a game. Multi-player games fall into two main categories: zero-sum games and non-zero-sum games. To obtain the optimal control policy for each player, one needs to solve Hamilton–Jacobi–Isaacs equations for zero-sum games and a set of coupled Hamilton–Jacobi equations for non-zero-sum games. Unfortunately, these equations are generally difficult or even impossible to solve analytically. To overcome this bottleneck, two ADP methods are proposed in this paper: a modified gradient-descent-based online algorithm and a novel iterative offline learning approach. Furthermore, to implement the proposed methods, we employ a single-network structure, which reduces the computational burden compared with the traditional multiple-network architecture. Simulation results demonstrate the effectiveness of our schemes.
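
For orientation, the zero-sum case can be illustrated with the standard textbook two-player formulation. This is a sketch under assumed notation, not necessarily the formulation used in the paper: the input-affine dynamics $\dot{x} = f(x) + g(x)u + k(x)w$, the state penalty $Q(x)$, the symmetric positive definite weight $R$, and the attenuation level $\gamma$ are all assumptions introduced here. With the cost $\int_0^\infty \left( Q(x) + u^\top R u - \gamma^2 w^\top w \right) dt$, which $u$ minimizes and $w$ maximizes, the stationarity conditions of the Hamiltonian give the saddle-point policies and the resulting Hamilton–Jacobi–Isaacs equation for the value function $V$:

$$
\begin{aligned}
u^*(x) &= -\tfrac{1}{2} R^{-1} g(x)^\top \nabla V(x), \qquad
w^*(x) = \tfrac{1}{2\gamma^2} k(x)^\top \nabla V(x), \\
0 &= Q(x) + \nabla V(x)^\top f(x)
 - \tfrac{1}{4} \nabla V(x)^\top g(x) R^{-1} g(x)^\top \nabla V(x)
 + \tfrac{1}{4\gamma^2} \nabla V(x)^\top k(x) k(x)^\top \nabla V(x).
\end{aligned}
$$

Because $\nabla V$ enters quadratically, this is a nonlinear partial differential equation in $V$, which is why closed-form solutions are rarely available and approximation schemes such as ADP are used instead.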

Journal Title: Artificial Intelligence Review

Year Published: 2017
