Photo from wikipedia

Multi-objective reinforcement learning (MORL) algorithms aim to approximate the Pareto frontier uniformly in multi-objective decision making problems. In the scenario of deep reinforcement learning (RL), gradient-based methods are often adopted… Click to show full abstract

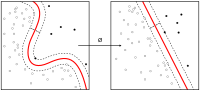

Multi-objective reinforcement learning (MORL) algorithms aim to approximate the Pareto frontier uniformly in multi-objective decision making problems. In the scenario of deep reinforcement learning (RL), gradient-based methods are often adopted to learn deep policies/value functions due to the fast convergence speed, while pure gradient-based methods can not guarantee a uniformly approximated Pareto frontier. On the other side, evolution strategies straightly manipulate in the solution space to achieve a well-distributed Pareto frontier, but applying evolution strategies to optimize deep networks is still a challenging topic. To leverage the advantages of both kinds of methods, we propose a two-stage MORL framework combining a gradient-based method and an evolution strategy. First, an efficient multi-policy soft actor-critic algorithm is proposed to learn multiple policies collaboratively. The lower layers of all policy networks are shared. The first-stage learning can be regarded as representation learning. Secondly, the multi-objective covariance matrix adaptation evolution strategy (MO-CMA-ES) is applied to fine-tune policy-independent parameters to approach a dense and uniform estimation of the Pareto frontier. Experimental results on three benchmarks (Deep Sea Treasure, Adaptive Streaming, and Super Mario Bros) show the superiority of the proposed method.

Journal Title: Applied Intelligence

Year Published: 2020

Link to full text (if available)

Share on Social Media: Sign Up to like & get

recommendations!