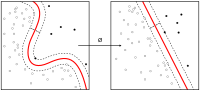

Neural networks are getting wider and deeper to achieve state-of-the-art results in various machine learning domains. Such networks have complex structures, large model sizes, and high computational costs. Moreover, they fail to adapt to new data because they are isolated in a specific domain-target space. To tackle these issues, we propose a sparse learning method that trains an existing network on new classes by selecting non-crucial parameters from the network. Sparse learning also preserves performance on existing classes, with no additional network structure or memory cost, by employing an effective node selection technique that uses information theory to analyze the neuron distribution of the fully connected layers and select unimportant parameters. Our method can learn up to 40% novel classes without notable loss in accuracy on existing classes. Through experiments, we show that the sparse learning method competes with state-of-the-art methods in accuracy and surpasses related algorithms in memory efficiency, processing speed, and overall training time. Importantly, our method can be applied in both small and large applications, which we demonstrate using well-known networks such as LeNet, AlexNet, and VGG-16.
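
The abstract does not spell out the selection criterion, so the following is only a rough sketch of what an information-theoretic node selection could look like. It assumes, purely for illustration, that a neuron in a fully connected layer counts as "unimportant" when the Shannon entropy of its activation histogram is low. The function names, the histogram binning, and the `fraction` parameter are hypothetical and are not taken from the paper.

```python
import math
from typing import List, Sequence


def activation_entropy(values: Sequence[float], bins: int = 10) -> float:
    """Shannon entropy (in bits) of a histogram of one neuron's activations."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return 0.0  # a constant neuron carries no information
    counts = [0] * bins
    for v in values:
        # Map v to a bin index in [0, bins - 1]; the maximum lands in the last bin.
        idx = min(int((v - lo) / (hi - lo) * bins), bins - 1)
        counts[idx] += 1
    total = len(values)
    return -sum(c / total * math.log2(c / total) for c in counts if c)


def select_low_entropy_neurons(
    activations: Sequence[Sequence[float]], fraction: float
) -> List[int]:
    """Return indices of the `fraction` of neurons with the lowest entropy.

    activations[i][j] is the output of neuron j on sample i.
    Assumption: low entropy stands in for "non-crucial"; the paper's
    actual criterion may differ.
    """
    n_neurons = len(activations[0])
    entropies = [
        activation_entropy([row[j] for row in activations])
        for j in range(n_neurons)
    ]
    k = int(n_neurons * fraction)
    ranked = sorted(range(n_neurons), key=lambda j: entropies[j])
    return sorted(ranked[:k])


# Four samples, four neurons: neuron 0 is constant, neuron 2 varies the most.
acts = [
    [0.5, 0.0, 0.0, 0.0],
    [0.5, 0.0, 1.0, 0.0],
    [0.5, 0.0, 2.0, 1.0],
    [0.5, 1.0, 3.0, 1.0],
]
candidates = select_low_entropy_neurons(acts, fraction=0.5)
```

In a scheme like this, the weights attached to the returned neurons would be the ones retrained on new classes, while the remaining weights stay fixed to protect accuracy on existing classes.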

Journal Title: Applied Intelligence

Year Published: 2020
