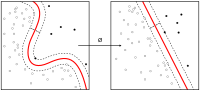

This study proposes an approximate parametric model-based Bayesian reinforcement learning approach for robots, based on online Bayesian estimation and online planning for an estimated model. The approach is designed to learn a robotic task from only a few real-world samples and to be robust against model uncertainty, within feasible computational resources. It employs two-stage modeling, composed of (1) a parametric differential equation model with a few parameters, based on prior knowledge such as equations of motion, and (2) a parametric model that interpolates a finite number of transition probability models for online estimation and planning. The approach modifies the online Bayesian estimation to be robust against approximation errors between the parametric model and the real plant. The policy planned for the interpolating model is proven to have a form of theoretical robustness. Numerical simulations and hardware experiments on a planar peg-in-hole task demonstrate the effectiveness of the proposed approach.
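The abstract does not spell out the estimator, so the following is only a minimal sketch of the general idea of online Bayesian estimation over a finite set of candidate transition models. It is not the paper's algorithm. Everything in it is an assumption made for illustration: the scalar linear dynamics `x_next = a * x + u`, the Gaussian likelihood, the candidate values, and the helper names `bayes_update` and `mixture_prediction`. The constant offset in the simulated plant stands in for a mismatch between the model and the real system.

```python
import math


def gaussian_logpdf(x, mean, std):
    """Log-density of a scalar Gaussian N(mean, std^2) evaluated at x."""
    return -0.5 * ((x - mean) / std) ** 2 - math.log(std * math.sqrt(2.0 * math.pi))


def bayes_update(weights, candidates, x, u, x_next, std):
    """One online Bayes step over a finite set of candidate models.

    Hypothetical setup: candidate k predicts x_next ~ N(a_k * x + u, std^2).
    Returns the normalized posterior weights after observing (x, u, x_next).
    """
    log_post = []
    for w, a in zip(weights, candidates):
        log_prior = math.log(w) if w > 0.0 else float("-inf")
        log_post.append(log_prior + gaussian_logpdf(x_next, a * x + u, std))
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(log_post)
    unnorm = [math.exp(lp - peak) for lp in log_post]
    total = sum(unnorm)
    return [v / total for v in unnorm]


def mixture_prediction(weights, candidates, x, u):
    """Posterior-weighted mean prediction across the candidate models."""
    return sum(w * (a * x + u) for w, a in zip(weights, candidates))


# Assumed candidate parameters and a uniform prior over them.
candidates = [0.5, 0.8, 1.1]
weights = [1.0 / len(candidates)] * len(candidates)

# Simulated plant: a = 0.8 plus a small constant offset that none of the
# candidate models captures (a stand-in for approximation error).
x = 1.0
for _ in range(20):
    u = 0.1
    x_next = 0.8 * x + u + 0.01
    weights = bayes_update(weights, candidates, x, u, x_next, std=0.1)
    x = x_next

# Index of the candidate with the highest posterior weight.
best = max(range(len(candidates)), key=lambda k: weights[k])
```

In this toy setting the posterior concentrates on the candidate closest to the simulated plant even though no candidate matches it exactly. A planner could then act on `mixture_prediction` or on the most probable candidate; which of these, if either, corresponds to the paper's interpolating model is not stated in the abstract.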

Journal Title: Autonomous Robots

Year Published: 2020
