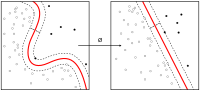

Abstract: Scene labeling, or scene parsing, aims to assign a semantic label to every pixel of an input image. Existing CNN-based models cannot leverage label dependencies, while RNN-based models predict labels only within a local context. In this paper, we propose a fast LSTM scene labeling network based on structural inference. A minimum spanning tree is used to build the image structure from which semantic relationships are constructed. This structure allows efficient generation of direct parent-child dependencies for arbitrary levels of superpixels, so that structural relationships can be learned with an LSTM. In particular, we propose a bi-directional recurrent network to model the information flow along each parent-child path. In this way, the recurrent units at both coarse and fine levels can exchange global and local context information across the entire image structure. The proposed network is 2.5× faster than state-of-the-art RNN-based models. Extensive experiments demonstrate that the proposed method significantly improves the learning of label dependencies and outperforms state-of-the-art methods on different benchmarks.
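The structural idea in the abstract (a minimum spanning tree over superpixels, followed by information flow in both directions along parent-child paths) can be illustrated with a small sketch. This is not the paper's implementation: it assumes Prim's algorithm for the tree, scalar features per superpixel, hypothetical edge weights standing in for superpixel dissimilarity, and plain summation in place of the LSTM units. The function names `build_tree` and `bidirectional_pass` are made up for illustration.

```python
import heapq

def build_tree(num_nodes, edges, root=0):
    """Prim's algorithm over an undirected weighted graph.

    edges: list of (u, v, weight). Returns (parent, order), where
    parent[i] is the tree parent of node i (-1 for the root) and
    order lists nodes so that every parent precedes its children.
    """
    adjacency = [[] for _ in range(num_nodes)]
    for u, v, w in edges:
        adjacency[u].append((w, v))
        adjacency[v].append((w, u))

    parent = [-1] * num_nodes
    visited = [False] * num_nodes
    order = []
    heap = [(0.0, root, -1)]  # (edge weight, node, candidate parent)
    while heap:
        _, node, par = heapq.heappop(heap)
        if visited[node]:
            continue
        visited[node] = True
        parent[node] = par
        order.append(node)
        for w, nb in adjacency[node]:
            if not visited[nb]:
                heapq.heappush(heap, (w, nb, node))
    return parent, order

def bidirectional_pass(features, parent, order, mix=0.5):
    """Toy stand-in for the two recurrent directions.

    Bottom-up: each node adds its subtree summary to its parent
    (fine-to-coarse context). Top-down: each node receives a fraction
    `mix` of its parent's state (coarse-to-fine context).
    """
    up = list(features)
    for node in reversed(order):      # children before parents
        if parent[node] != -1:
            up[parent[node]] += up[node]

    down = list(up)
    for node in order:                # parents before children
        if parent[node] != -1:
            down[node] = up[node] + mix * down[parent[node]]
    return up, down

# Four hypothetical superpixels; weights mimic feature dissimilarity.
edges = [(0, 1, 1.0), (1, 2, 2.0), (0, 2, 4.0), (2, 3, 1.5)]
parent, order = build_tree(4, edges)
up, down = bidirectional_pass([1.0, 2.0, 3.0, 4.0], parent, order)
```

In a learned model, the summation and mixing steps would be replaced by recurrent units with trained weights; the sketch only shows why a tree gives each node exactly one parent and a well-defined processing order in each direction.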

Journal Title: Neurocomputing

Year Published: 2021
