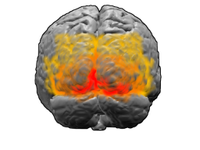

The human visual ability to recognize objects and scenes is widely thought to rely on representations in category-selective regions of visual cortex. These representations could support object vision by specifically representing objects or, more simply, by representing complex visual features regardless of the particular spatial arrangement needed to constitute real-world objects, that is, by representing visual textures. To discriminate between these hypotheses, we leveraged an image synthesis approach that, unlike previous methods, provides independent control over the complexity and spatial arrangement of visual features. We found that human observers could easily detect a natural object among synthetic images with similar complex features that were spatially scrambled. However, observer models built from BOLD responses from category-selective regions, as well as a model of macaque inferotemporal cortex and ImageNet-trained deep convolutional neural networks, were all unable to identify the real object. This inability was not due to an insufficient signal-to-noise ratio, as all of these observer models could predict human performance in image categorization tasks. How then might these texture-like representations in category-selective regions support object perception? An image-specific readout from category-selective cortex yielded a representation that was more selective for natural feature arrangement, showing that the information necessary for object discrimination is available. Thus, our results suggest that the role of human category-selective visual cortex is not to explicitly encode objects but rather to provide a basis set of texture-like features that can be infinitely reconfigured to flexibly learn and identify new object categories.

Significance Statement

Virtually indistinguishable metamers of visual textures, such as wood grain, can be synthesized by matching complex features regardless of their spatial arrangement (1–3). However, humans are not fooled by such synthetic images of scrambled objects. Thus, category-selective regions of human visual cortex might be expected to exhibit representational geometry preferentially sensitive to natural objects. On the contrary, we demonstrate that observer models based on category-selective regions, models of macaque inferotemporal cortex, and ImageNet-trained deep convolutional neural networks do not preferentially represent natural images, even though they are able to discriminate image categories. This suggests the need to reconceptualize the role of category-selective cortex as representing a basis set of complex texture-like features, useful for a myriad of visual behaviors.

Journal Title: Proceedings of the National Academy of Sciences of the United States of America

Year Published: 2022
