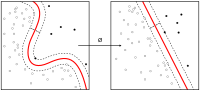

In this paper, we propose MiT, a novel multi-view transformer model for 3D/4D facial affect recognition. MiT incorporates patch and position embeddings computed from the patches of multiple views and uses them to learn diverse facial muscle movements, which yields effective recognition performance. We also propose a multi-view loss function that is gradient-friendly, which speeds up gradient computation during back-propagation, and that also leverages the correlation among the underlying facial patterns across views. Additionally, we introduce learnable multi-view weights that substantially aid training. Finally, we equip our model with support for distributed computation, enabling faster learning and greater computational convenience. Extensive experiments on widely used 3D/4D facial expression recognition (FER) datasets show that our model outperforms existing methods.
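To make two of the ideas named in the abstract concrete, here is a minimal, hypothetical sketch. It shows ViT-style patch and position embeddings computed for a single 2D view, and a set of per-view weights that fuse per-view class scores. It is not the paper's implementation. The function names, the softmax normalization of the view weights, and the late-fusion design are all assumptions. The transformer encoder and the multi-view loss are not shown, because the abstract does not specify them.

```python
import math


def split_into_patches(image, patch):
    """Split a 2D image (list of equal-length rows) into non-overlapping
    patch x patch blocks, each flattened row-major, in raster order."""
    h, w = len(image), len(image[0])
    assert h % patch == 0 and w % patch == 0, "image size must be divisible by patch"
    patches = []
    for r in range(0, h, patch):
        for c in range(0, w, patch):
            patches.append([image[r + i][c + j]
                            for i in range(patch) for j in range(patch)])
    return patches


def embed_view(image, patch, proj, pos):
    """ViT-style tokens for one view: a linear projection of each flattened
    patch (proj is d x patch*patch) plus a per-position embedding (pos is
    num_patches x d). In a trained model both would be learned parameters."""
    tokens = []
    for k, p in enumerate(split_into_patches(image, patch)):
        e = [sum(wt * x for wt, x in zip(row, p)) for row in proj]
        tokens.append([a + b for a, b in zip(e, pos[k])])
    return tokens


def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [v / total for v in exps]


def fuse_views(view_scores, view_logits):
    """Hypothetical late fusion: weight each view's class scores by
    softmax(view_logits). view_logits stands in for trainable per-view
    weights; here they are plain numbers, not optimized."""
    weights = softmax(view_logits)
    n_classes = len(view_scores[0])
    return [sum(w * s[c] for w, s in zip(weights, view_scores))
            for c in range(n_classes)]


# Usage: a 4x4 "view" split into 2x2 patches, projected to d = 2.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
proj = [[1, 0, 0, 0], [0, 0, 0, 1]]  # keeps each patch's first and last pixel
pos = [[0, 0] for _ in range(4)]     # zero position embeddings for readability
tokens = embed_view(image, 2, proj, pos)
fused = fuse_views([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])
```

Here `tokens` holds one 2-dimensional embedding per patch, and `fused` averages the two views equally because their logits are equal. Late fusion of per-view scores is only one way to apply view weights. The weights could equally scale each view's tokens before a shared encoder, and the abstract does not say which approach the paper uses.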

Journal Title: IEEE Signal Processing Letters

Year Published: 2021
