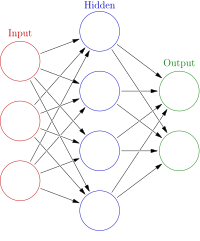

With the development of deep neural networks, multi-channel speech separation techniques for fixed array geometries have achieved remarkable performance. However, distributed microphone array processing remains challenging because the network must handle inputs whose dimensions vary. To address this problem, we propose a triple-path recurrent neural network (TPRNN) with multi-scale aggregation blocks for multi-channel speech separation with distributed microphone arrays. First, we extend the single-channel dual-path recurrent neural network by adding multi-scale aggregation blocks and adaptive feature fusion blocks. Next, we introduce a third path along the spatial dimension to model spatial information. In this way, TPRNN alternately and iteratively performs inter-channel, intra-chunk, and inter-chunk modeling. Experimental results show that the proposed approach outperforms other advanced baselines on multi-channel speech separation and enhancement tasks using spatially distributed microphones.
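
The alternation between the three paths can be illustrated with a toy sketch. This is not the authors' implementation: it assumes a scalar feature per position, a nested-list tensor indexed as `x[channel][chunk][position]`, and a causal running mean as a stand-in for each recurrent layer. It omits the multi-scale aggregation and adaptive feature fusion blocks entirely. All function names are hypothetical. The point it shows is that a sequence operator applied along the channel axis places no constraint on the number of microphones.

```python
def running_mean(seq):
    """Stand-in for a recurrent layer (an assumption, not the paper's RNN):
    a causal running mean, which accepts a sequence of any length."""
    out, total = [], 0.0
    for count, value in enumerate(seq, start=1):
        total += value
        out.append(total / count)
    return out


def apply_along(x, axis, fn):
    """Apply fn to every 1-D sequence along `axis` of x[channel][chunk][position]."""
    dims = (len(x), len(x[0]), len(x[0][0]))
    out = [[[0.0] * dims[2] for _ in range(dims[1])] for _ in range(dims[0])]
    a, b = [d for d in range(3) if d != axis]  # the two axes held fixed
    for i in range(dims[a]):
        for j in range(dims[b]):
            idx = [0, 0, 0]
            idx[a], idx[b] = i, j
            coords = []
            for t in range(dims[axis]):
                idx[axis] = t
                coords.append(tuple(idx))
            seq = fn([x[c][s][k] for c, s, k in coords])
            for (c, s, k), value in zip(coords, seq):
                out[c][s][k] = value
    return out


def triple_path_block(x):
    """One pass over the three paths, in the order named in the abstract."""
    x = apply_along(x, 0, running_mean)  # inter-channel: across microphones
    x = apply_along(x, 2, running_mean)  # intra-chunk: within each chunk
    x = apply_along(x, 1, running_mean)  # inter-chunk: across chunks
    return x


def make_input(channels, chunks, chunk_len):
    """Hypothetical toy input with a distinct value at each position."""
    return [[[float(c + s + k) for k in range(chunk_len)]
             for s in range(chunks)] for c in range(channels)]


def shape(x):
    return (len(x), len(x[0]), len(x[0][0]))
```

Calling `triple_path_block(make_input(2, 3, 4))` and `triple_path_block(make_input(5, 3, 4))` runs the same code on two and five channels and preserves the input shape in both cases. In a trained model each path would presumably be a learned recurrent layer whose weights are shared across the fixed axes, which is what lets one set of parameters serve arrays of different sizes.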

Journal Title: IEEE Signal Processing Letters

Year Published: 2022
