Photo from wikipedia

Stochastic dynamic teams and games are rich models for decentralized systems and challenging testing grounds for multi-agent learning. Several algorithms exist for stochastic games, some with guarantees of convergence to… Click to show full abstract

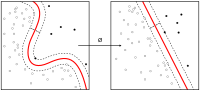

Stochastic dynamic teams and games are rich models for decentralized systems and challenging testing grounds for multi-agent learning. Several algorithms exist for stochastic games, some with guarantees of convergence to equilibrium. However, cooperative games, where team optimality is the goal, may have high-cost suboptimal equilibria. Previous work that guarantees team optimality assumes stateless dynamics, an explicit coordination mechanism, or joint-control sharing. In this paper, we present an algorithm with guarantees of convergence to team optimal policies in teams and common interest games. The algorithm is a two-timescale method that uses a variant of Q-learning on the finer timescale to perform policy evaluation while exploring the policy space on the coarser timescale. Agents following this algorithm are "independent learners": they use only local controls, local cost realizations, and global state information, without access to the controls of other agents. The results presented here are the first, to our knowledge, to give formal guarantees of convergence to team optimality using independent learners in stochastic dynamic teams and common interest games.
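The abstract does not spell out the algorithm, so the following is only a hypothetical toy sketch of the finer-timescale ingredient: during one phase, both agents hold their baseline policies fixed (with a little random exploration), and agent 1 runs Q-learning over its own actions using the global state and the common cost, never reading agent 2's control. At the end of the phase it checks whether its baseline is (near-)greedy with respect to the learned Q-factors. The two-state team, the cost, the dynamics, the exploration rate `rho`, the discount `beta`, the tolerance `delta`, and the names `evaluate_phase` and `is_best_reply` are all assumptions made for illustration. The coarser-timescale rule the paper uses to search the policy space for team optimality is not reproduced here.

```python
import random

STATES = (0, 1)
ACTIONS = (0, 1)

def team_cost(x, u1, u2):
    """Hypothetical common cost: 0 when both controls match the state, else 1."""
    return 0.0 if u1 == u2 == x else 1.0

def next_state(rng):
    """Hypothetical dynamics: next state drawn uniformly, independent of controls."""
    return rng.choice(STATES)

def evaluate_phase(policy1, policy2, steps=20000, beta=0.5, rho=0.1, seed=0):
    """Finer timescale (sketch): agent 1's Q-learning over its OWN actions only.

    Baseline policies stay fixed for the whole phase; each agent explores with
    probability rho. u2 is simulated by the environment, but agent 1's update
    uses only the global state x, its own control u1, and the cost.
    """
    rng = random.Random(seed)
    q = {(x, a): 0.0 for x in STATES for a in ACTIONS}
    visits = {key: 0 for key in q}
    x = rng.choice(STATES)
    for _ in range(steps):
        u1 = rng.choice(ACTIONS) if rng.random() < rho else policy1[x]
        u2 = rng.choice(ACTIONS) if rng.random() < rho else policy2[x]
        cost = team_cost(x, u1, u2)
        x_next = next_state(rng)
        visits[(x, u1)] += 1
        alpha = 1.0 / visits[(x, u1)]  # decreasing step size per (state, action)
        target = cost + beta * min(q[(x_next, a)] for a in ACTIONS)
        q[(x, u1)] += alpha * (target - q[(x, u1)])
        x = x_next
    return q

def is_best_reply(q, policy, delta=0.05):
    """Phase-end check (sketch): is the baseline within delta of greedy in every state?"""
    return all(q[(x, policy[x])] <= min(q[(x, a)] for a in ACTIONS) + delta
               for x in STATES)

# Usage: agent 2 plays u2 = x; agent 1 starts from a poor baseline.
teammate = {0: 0, 1: 1}
q = evaluate_phase({0: 1, 1: 0}, teammate)
print(is_best_reply(q, {0: 0, 1: 1}))  # True: matching the state is greedy
print(is_best_reply(q, {0: 1, 1: 0}))  # False: the poor baseline is not
```

Because Q-learning here is off-policy, agent 1 learns Q-factors of the single-agent problem induced by its teammate's fixed policy even while following a poor baseline; exploration with rate `rho` is what keeps every action visited.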

Journal Title: IEEE Transactions on Automatic Control

Year Published: 2021
