

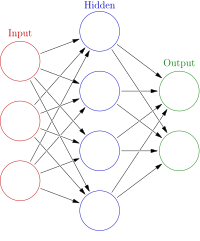

Deep learning training involves a large number of operations, which are dominated by high-dimensionality Matrix-Vector Multiplies (MVMs). This has motivated hardware accelerators that enhance compute efficiency, but data movement and memory access are proving to be key bottlenecks. In-Memory Computing (IMC) is an approach with the potential to overcome these bottlenecks by performing computations in place within dense 2-D memory. However, IMC fundamentally trades dynamic range for its efficiency and throughput gains, which raises distinct challenges for training, where compute-precision requirements are substantially higher than for inference. This paper explores training on IMC hardware by leveraging two recent developments: (1) a training algorithm that enables aggressive quantization through a radix-4 number representation; (2) IMC that computes using precision capacitors, whereby analog noise effects can be made well below quantization effects. Energy modeling calibrated to a measured silicon prototype implemented in 16 nm CMOS shows that energy savings of over $400\times$ can be achieved with full quantizer adaptability, where all training MVMs can be mapped to IMC, and $3\times$ can be achieved with two-level quantizer adaptability, where two of the three training MVMs can be mapped to IMC.
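To make the terms in the abstract concrete, the following is a minimal NumPy sketch, not the paper's algorithm or hardware mapping. It assumes that "radix-4 number representation" can be illustrated by rounding each value to a signed power of 4, and that the "three training MVMs" correspond to the usual forward, backward (input-gradient), and weight-gradient products of a fully connected layer. The function names `quantize_radix4` and `training_mvms`, the parameters `num_levels` and `max_exp`, and the choice to quantize only the incoming gradient are all hypothetical.

```python
import numpy as np

def quantize_radix4(x, num_levels=7, max_exp=0):
    """Round each nonzero entry to sign * 4**e (illustrative assumption).

    e is an integer clipped to [max_exp - num_levels + 1, max_exp].
    Zeros stay zero; very small nonzero values are clipped up to the
    smallest representable level rather than flushed to zero.
    """
    x = np.asarray(x, dtype=np.float64)
    sign = np.sign(x)
    mag = np.abs(x)
    min_exp = max_exp - num_levels + 1
    out = np.zeros_like(x)
    nz = mag > 0
    # Round in the log domain (base 4), then clip to the exponent range.
    e = np.round(np.log(mag[nz]) / np.log(4.0))
    e = np.clip(e, min_exp, max_exp)
    out[nz] = sign[nz] * np.power(4.0, e)
    return out

def training_mvms(W, x, grad_y):
    """Three products for one fully connected layer (assumed decomposition)."""
    g = quantize_radix4(grad_y)   # quantize the incoming gradient only
    y = W @ x                     # 1) forward: activations
    grad_x = W.T @ g              # 2) backward: gradient w.r.t. the input
    grad_W = np.outer(g, x)       # 3) weight gradient (outer product)
    return y, grad_x, grad_W
```

With `num_levels=4` and `max_exp=1`, for instance, the representable magnitudes are 1/16, 1/4, 1, and 4, so each step between levels covers a factor of four. This shows how a radix-4 scale spans a wide dynamic range with few levels at the cost of coarse resolution.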

Journal Title: IEEE Transactions on Circuits and Systems I: Regular Papers

Year Published: 2022

