Photo from wikipedia

In class-incremental semantic segmentation, we have no access to the labeled data of previous tasks. Therefore, when incrementally learning new classes, deep neural networks suffer from catastrophic forgetting of previously… Click to show full abstract

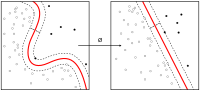

In class-incremental semantic segmentation, we have no access to the labeled data of previous tasks. Therefore, when incrementally learning new classes, deep neural networks suffer from catastrophic forgetting of previously learned knowledge. To address this problem, we propose to apply a self-training approach that leverages unlabeled data, which is used for rehearsal of previous knowledge. Specifically, we first learn a temporary model for the current task, and then, pseudo labels for the unlabeled data are computed by fusing information from the old model of the previous task and the current temporary model. In addition, conflict reduction is proposed to resolve the conflicts of pseudo labels generated from both the old and temporary models. We show that maximizing self-entropy can further improve results by smoothing the overconfident predictions. Interestingly, in the experiments, we show that the auxiliary data can be different from the training data and that even general-purpose, but diverse auxiliary data can lead to large performance gains. The experiments demonstrate the state-of-the-art results: obtaining a relative gain of up to 114% on Pascal-VOC 2012 and 8.5% on the more challenging ADE20K compared to previous state-of-the-art methods.

Journal Title: IEEE transactions on neural networks and learning systems

Year Published: 2022

Link to full text (if available)

Share on Social Media: Sign Up to like & get

recommendations!