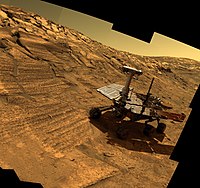

Photo from wikipedia

Light detection and ranging (LiDAR) odometry plays a crucial role in autonomous mobile robots and unmanned ground vehicles (UGVs). This paper presents a deep learning–based odometry system that takes two successive three-dimensional (3D) point clouds, estimates the scene flow between them, and then predicts their relative pose. The network consumes the consecutive 3D point clouds directly and outputs their scene flow and an uncertainty mask in a coarse-to-fine fashion. A pose estimation layer without trainable parameters computes the pose from the scene flow. We also introduce a scan-to-map optimization algorithm to enhance the robustness and accuracy of the system. Our experiments on the KITTI odometry data set and our campus data set demonstrate the effectiveness of the proposed deep learning–based point cloud odometry.
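The abstract does not say how the parameter-free pose layer is built. One common way to recover a rigid pose from per-point scene flow without trainable parameters is a weighted least-squares fit (the Kabsch/SVD method), sketched below as an illustration only. The function name `pose_from_scene_flow`, its arguments, and the use of the mask as per-point weights are assumptions for this sketch, not the authors' implementation.

```python
import numpy as np

def pose_from_scene_flow(points, flow, weights=None):
    """Fit R, t so that R @ p + t approximates p + flow in the weighted
    least-squares sense (Kabsch/SVD). Hypothetical helper, not from the paper.

    points  : (N, 3) source point cloud
    flow    : (N, 3) estimated scene flow per point
    weights : (N,) optional non-negative confidences (assumed to come
              from something like an uncertainty mask)
    """
    points = np.asarray(points, dtype=float)
    targets = points + np.asarray(flow, dtype=float)
    if weights is None:
        weights = np.ones(len(points))
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()  # normalise so the weights sum to 1

    # Weighted centroids of the source and flowed point sets.
    src_mean = w @ points
    tgt_mean = w @ targets
    src_c = points - src_mean
    tgt_c = targets - tgt_mean

    # 3x3 weighted cross-covariance, then SVD.
    H = (src_c * w[:, None]).T @ tgt_c
    U, _, Vt = np.linalg.svd(H)

    # Guard against a reflection so that det(R) = +1.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_mean - R @ src_mean
    return R, t
```

Because the fit is closed-form, it has no learned parameters; in this sketch, down-weighting points flagged as uncertain (for example, moving objects) would reduce their influence on the estimated pose.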

Journal Title: Transactions of the Institute of Measurement and Control

Year Published: 2022
